The Hidden Cost of Managing Market Data Feeds In-House

Every Feed Is a Risk You're Choosing to Own

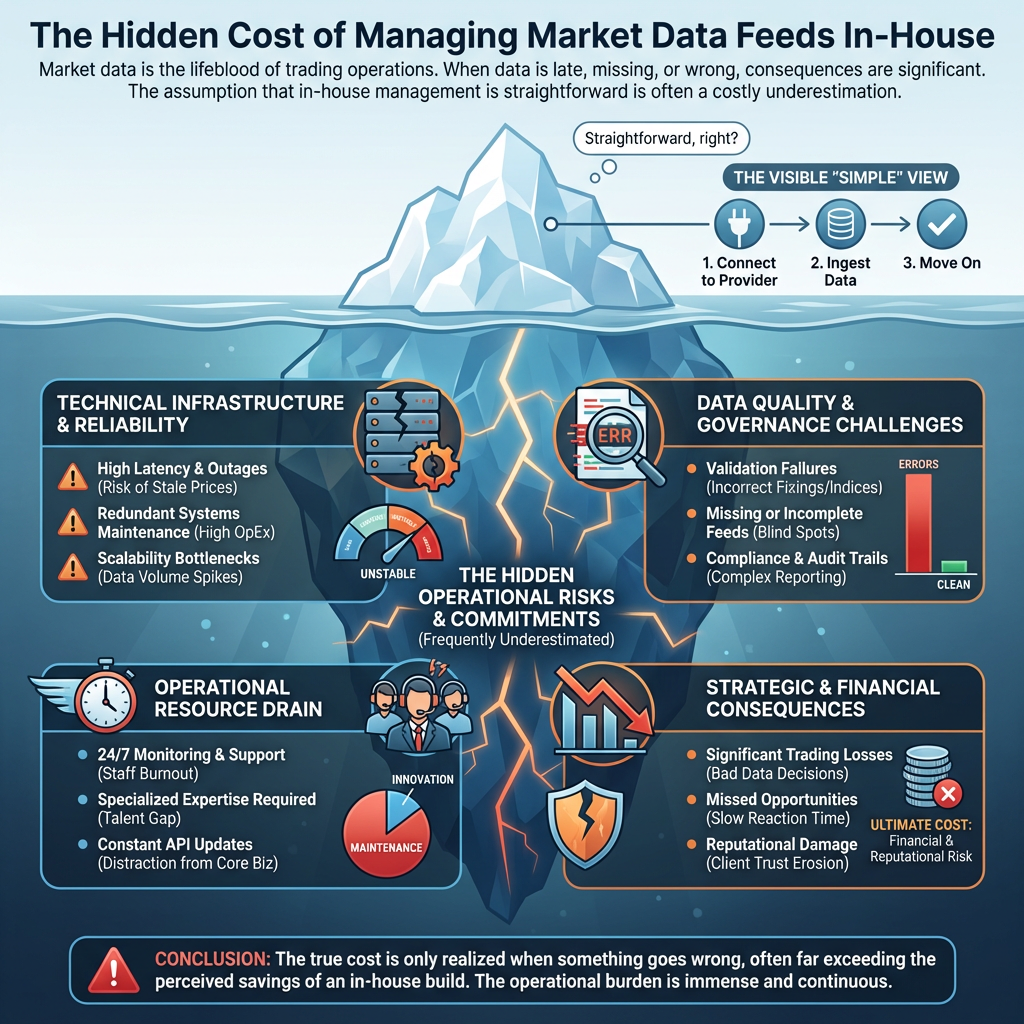

Many organisations assume that managing market data feeds in-house is straightforward: connect to a provider, ingest the data, move on. In practice, it is one of the more demanding operational commitments a data or technology team can take on. This post sets out the risks that are frequently underestimated.

Market data is the lifeblood of energy, commodities, and financial trading operations. Prices, curves, fixings, and indices flow into risk systems, trading platforms, and reporting tools every day, and when that data is late, missing, or wrong, the consequences can be significant.

Late Data

The Silent Killer of Downstream Processes

Market data providers publish on schedules, but those schedules are not guarantees. Energy fixings, commodity settlements, and financial benchmark rates can arrive minutes or hours late - and without active monitoring, downstream systems simply don't know.

The danger is not just the delay itself. It is the silent propagation of that delay into risk calculations, curve builds, and P&L reports. A forward curve built on yesterday's settlement because today's hasn't arrived yet looks correct. It isn't.

Managing this properly requires:

- Automated monitoring of expected publication times per feed

- Alerting when data hasn't arrived within tolerance

- Fallback logic or human escalation procedures

- Audit trails showing when data was received versus when it was expected

Building and maintaining this infrastructure is a non-trivial engineering investment - and it needs to work reliably, every day, for every feed.
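To make the first few requirements more concrete, here is a minimal Python sketch. It compares each feed's expected publication time, plus a tolerance, against what has actually arrived. The `FeedSchedule` structure, the feed names and the times are hypothetical. A production version would also need publication calendars, persistence and an escalation path.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class FeedSchedule:
    """Hypothetical expected publication for one feed on one day."""
    feed_id: str
    expected_at: datetime   # timezone-aware expected publication time
    tolerance: timedelta    # lateness allowed before alerting


def overdue_feeds(schedules, received, now):
    """Return (feed_id, minutes_late) for every feed that has not arrived
    by expected_at + tolerance. `received` maps feed_id -> arrival time."""
    late = []
    for s in schedules:
        if s.feed_id in received:
            continue  # arrived; its delay is recorded separately for audit
        if now > s.expected_at + s.tolerance:
            minutes_late = int((now - s.expected_at).total_seconds() // 60)
            late.append((s.feed_id, minutes_late))
    return late


def arrival_delay_minutes(schedule, arrived_at):
    """Received-versus-expected delay for the audit trail (negative = early)."""
    return (arrived_at - schedule.expected_at).total_seconds() / 60


# Usage with illustrative feeds and times (all in UTC).
day = datetime(2024, 3, 4, tzinfo=timezone.utc)
schedules = [
    FeedSchedule("POWER_SETTLEMENT", day.replace(hour=17), timedelta(minutes=30)),
    FeedSchedule("GAS_FIXING", day.replace(hour=19, minute=30), timedelta(minutes=30)),
]
received = {"POWER_SETTLEMENT": day.replace(hour=17, minute=5)}
now = day.replace(hour=20, minute=15)
print(overdue_feeds(schedules, received, now))  # [('GAS_FIXING', 45)]
print(arrival_delay_minutes(schedules[0], received["POWER_SETTLEMENT"]))  # 5.0
```

The sketch keeps "not yet arrived" separate from "arrived late". The first case drives alerting, and the second feeds the audit trail.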

Missing Data

Knowing What Should Have Arrived

A related but distinct problem: not all missing data looks missing.

A provider might publish a file that contains 47 of the 48 instruments you depend on. Your ingestion pipeline processes it successfully - no errors, no alerts. But one fixing is absent. It might be a public holiday in a market you don't track closely. It might be a provider error. It might be a change in their publication format that dropped a field silently.

You only know something is missing if you know what to expect. This requires maintaining a data specification - a definition of what each feed should contain, at what granularity, on which publication days - and validating every publication against it.

Most in-house implementations do not have this. They validate that data arrived; they do not validate that the right data arrived.
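A specification check does not need to be elaborate to catch the 47-of-48 case. The sketch below assumes a hypothetical `FeedSpec` that holds the expected instruments, the publication weekdays and any known holidays. It reports what is missing or unexpected in a publication that otherwise parsed cleanly. Real specifications would also cover granularity, units and per-market calendars.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FeedSpec:
    """Hypothetical specification for one feed: the instruments it should
    contain and the days on which it is expected to publish."""
    feed_id: str
    expected_instruments: frozenset
    publication_weekdays: frozenset = frozenset({0, 1, 2, 3, 4})  # Mon-Fri
    holidays: frozenset = frozenset()  # dates with no publication expected


def validate_publication(spec, pub_date, instruments):
    """Check a processed publication against its specification.

    Returns a dict of problems; an empty dict means the content matches."""
    received = set(instruments)
    expected_today = (
        pub_date.weekday() in spec.publication_weekdays
        and pub_date not in spec.holidays
    )
    if not expected_today:
        # Data on a non-publication day is itself worth investigating.
        return {"unexpected_publication": sorted(received)} if received else {}
    problems = {}
    missing = spec.expected_instruments - received
    extra = received - spec.expected_instruments
    if missing:
        problems["missing"] = sorted(missing)
    if extra:
        problems["unexpected"] = sorted(extra)
    return problems


# Usage: a file that parsed cleanly but is one instrument short.
spec = FeedSpec("DAILY_FIXINGS", frozenset(f"INST_{i:02d}" for i in range(48)))
received = [f"INST_{i:02d}" for i in range(48) if i != 17]
print(validate_publication(spec, date(2024, 3, 4), received))  # {'missing': ['INST_17']}
```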

Badly Formed Data

Format Changes and Silent Corruption

Data providers change their formats. Sometimes they announce it. Often they don't, or the notice arrives too late for a comfortable implementation window.

Common issues include:

- Column reordering in CSV or Excel publications

- Encoding changes producing garbled characters in instrument names or descriptions

- Decimal separator changes in continental European publications

- New fields that shift column positions and break positional parsing

- Schema changes in API responses that break downstream deserialisers

- Timestamp format changes that cause silent timezone errors

The most dangerous failures are the ones that produce plausible-looking bad data rather than an obvious error. A price that is off by a factor of 100 due to a decimal separator change will pass most basic validation checks. A timestamp parsed in the wrong timezone will be shifted by the offset between the two zones. That offset is often exactly one hour, as between London and Central European time, and the error is easy to miss until it matters.
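The sketch below illustrates three defensive habits under simple assumptions:

- Parse prices with the decimal separator declared for the feed rather than guessing it.
- Flag day-on-day moves that are implausibly large and close to a power of ten.
- Reject timestamps that lack an explicit UTC offset rather than assume one.

The function names, the 50% move threshold and the 5% closeness tolerance are illustrative choices, not recommended values.

```python
import math
from datetime import datetime


def naive_parse(raw):
    # Illustrates the failure mode: treating "," as a thousands separator
    # turns a continental-format "45,50" into 4550.0.
    return float(raw.replace(",", ""))


def parse_price(raw, decimal_sep):
    """Parse a price using the decimal separator declared in the feed's
    (hypothetical) specification. Thousands separators are not handled here."""
    text = raw.strip()
    if decimal_sep == ",":
        text = text.replace(",", ".")
    return float(text)


def check_price(new, previous, max_move=0.5):
    """Return a warning string if `new` is implausible relative to `previous`,
    else None. max_move (50% day-on-day) is an illustrative threshold.
    Zero or negative prices, possible in power markets, need a different
    test, so this sketch skips them."""
    if previous <= 0 or new <= 0:
        return None
    ratio = new / previous
    if abs(ratio - 1) <= max_move:
        return None
    exponent = round(math.log10(ratio))
    if exponent != 0 and math.isclose(ratio, 10 ** exponent, rel_tol=0.05):
        return f"possible scale error (x10^{exponent})"
    return "move exceeds tolerance"


def parse_timestamp(text):
    """Accept only ISO 8601 timestamps with an explicit UTC offset, so a
    missing timezone fails loudly instead of being silently assumed."""
    ts = datetime.fromisoformat(text)
    if ts.tzinfo is None:
        raise ValueError(f"timestamp without timezone: {text!r}")
    return ts


# Usage
print(naive_parse("45,50"))                     # 4550.0
print(check_price(naive_parse("45,50"), 45.2))  # possible scale error (x10^2)
print(parse_price("45,50", decimal_sep=","))    # 45.5
```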

Maintaining robust, defensive parsing for dozens of feeds - and catching changes quickly when they occur - requires dedicated attention that most in-house teams cannot sustain alongside their other responsibilities.

Revision Handling

The Integrity of Your Historical Record

Many market data providers revise their published data. Settlement prices are corrected. Fixings are restated. Historical series are revised retrospectively.

For organisations where historical time series underpin model calibration, back-testing, or regulatory reporting, this matters enormously. A historical dataset that does not reflect applied revisions is not the same dataset the provider published - and if that gap is discovered during an audit or a model review, explaining it is uncomfortable.

Proper revision handling requires:

- Detecting when a provider has republished historical data

- Applying corrections to the stored time series

- Maintaining a record of what changed, when, and why

- Preserving the original publication for audit purposes where required

This is not a feature that gets added later. It needs to be designed into the data architecture from the start.
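One way to design this in from the start is to never overwrite a value. Instead, each revision is appended alongside the original, together with when it arrived and why. The hypothetical `RevisionStore` below is an in-memory sketch of that append-only approach. A real implementation would sit on a database and would need to handle revisions that arrive out of order.

```python
from dataclasses import dataclass
from datetime import date, datetime, timezone


@dataclass(frozen=True)
class Observation:
    """One published value for one instrument and value date (hypothetical)."""
    instrument: str
    value_date: date
    value: float
    received_at: datetime
    reason: str = "original"


class RevisionStore:
    """Minimal append-only store: revisions are added, never overwritten.
    Assumes observations are ingested in the order they were received."""

    def __init__(self):
        self._history = {}  # (instrument, value_date) -> [Observation, ...]

    def ingest(self, obs):
        """Store obs and return True if it is new or a revision. Return False
        if it repeats the current value, e.g. an unchanged file that was re-sent."""
        versions = self._history.setdefault((obs.instrument, obs.value_date), [])
        if versions and versions[-1].value == obs.value:
            return False
        versions.append(obs)
        return True

    def current(self, instrument, value_date):
        """Latest value: the series with all revisions applied."""
        return self._history[(instrument, value_date)][-1].value

    def as_known_at(self, instrument, value_date, when):
        """Value as it stood at `when`, for reproducing an earlier report."""
        known = [o for o in self._history[(instrument, value_date)] if o.received_at <= when]
        return known[-1].value if known else None

    def revisions(self, instrument, value_date):
        """Audit view: every version received, oldest first."""
        return list(self._history[(instrument, value_date)])


# Usage
d = date(2024, 3, 4)
store = RevisionStore()
store.ingest(Observation("GAS_FIXING", d, 30.10, datetime(2024, 3, 4, 18, 0, tzinfo=timezone.utc)))
store.ingest(Observation("GAS_FIXING", d, 30.25, datetime(2024, 3, 5, 10, 0, tzinfo=timezone.utc),
                         "provider correction"))
print(store.current("GAS_FIXING", d))  # 30.25
print(store.as_known_at("GAS_FIXING", d, datetime(2024, 3, 4, 20, 0, tzinfo=timezone.utc)))  # 30.1
```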

Vendor Relationship Management

The Operational Overhead Nobody Budgets For

When a data provider publishes incorrect data, someone needs to identify it, raise a support ticket, follow it up, and apply the correction when it arrives. When a provider changes their API without notice, someone needs to debug the failure, engage their technical support team, and implement a fix - usually under time pressure because downstream systems are already broken.

This is a specialist activity. It requires:

- Knowledge of each provider's support processes and escalation paths

- Understanding of the data well enough to identify when something is wrong

- The relationship capital to get issues resolved quickly

- The time to do it, even when it happens at 06:00 on a Monday

For organisations managing ten, twenty, or thirty feeds, this can consume a meaningful proportion of a skilled engineer's or analyst's time - time that is rarely accounted for in the original business case for bringing data management in-house.

Regulatory and Audit Exposure

In energy and commodities markets, data provenance and integrity are increasingly subject to regulatory scrutiny. Being able to demonstrate that the prices used in a trade booking, a risk calculation, or a regulatory report were sourced accurately, arrived on time, and have not been altered without record is no longer just good practice - in many jurisdictions, it is an obligation.

An in-house data management solution needs to provide:

- Full audit trails of data receipt, processing, and storage

- Evidence of validation procedures and their outcomes

- Records of any manual interventions or corrections

- Clear lineage from raw provider data to derived outputs

Building this auditability into a homegrown system - and keeping it current as regulations evolve - is a significant and ongoing investment.
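A basic building block for lineage is to fingerprint every raw provider file. Each derived output then records the fingerprints of the files it was built from. The sketch below uses SHA-256 from Python's standard `hashlib`. The `LineageRecord` fields and the in-memory list, which stands in for durable, append-only storage, are assumptions for illustration only.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def sha256_hex(data):
    """Content fingerprint of a raw file or derived output."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    """Hypothetical entry linking an output to the exact inputs and step behind it."""
    output_id: str
    output_hash: str
    input_hashes: tuple   # fingerprints of the raw provider files used
    step: str             # processing step name (illustrative)
    performed_by: str     # system account, or a named person for manual edits
    performed_at: str     # ISO 8601 UTC timestamp


def record_step(output_id, output_bytes, input_files, step, performed_by, log):
    """Append a lineage record to `log` (a list standing in for durable storage)."""
    rec = LineageRecord(
        output_id=output_id,
        output_hash=sha256_hex(output_bytes),
        input_hashes=tuple(sha256_hex(b) for b in input_files),
        step=step,
        performed_by=performed_by,
        performed_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(rec)
    return rec


def verify_input(rec, raw_bytes):
    """True if this raw file is unchanged since the output was built from it."""
    return sha256_hex(raw_bytes) in rec.input_hashes


# Usage
log = []
raw = b"date,instrument,price\n2024-03-04,GAS_FIXING,30.10\n"
rec = record_step("FWD_CURVE_2024-03-04", b"<curve output>", [raw], "curve_build", "svc-curves", log)
print(verify_input(rec, raw))                               # True
print(verify_input(rec, raw.replace(b"30.10", b"30.25")))   # False
```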

The Scaling Problem

In-house market data management tends to work reasonably well at small scale. Ten feeds, a handful of providers, a team that knows the quirks of each source - manageable.

The problems compound as scope grows. Each new feed adds monitoring requirements, validation logic, format-specific parsing, and vendor relationships. The maintenance burden grows faster than the feed count, because feeds interact: a curve that depends on three underlying fixings now has three potential failure modes instead of one.
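To illustrate how feeds interact, the hypothetical sketch below treats feeds and derived outputs as a dependency graph. It walks the graph downstream to find every output that a single failed feed touches. The names are illustrative. The example shows how one late fixing can invalidate several outputs at once, which is one reason the burden tracks the dependencies between feeds and not just the feed count.

```python
from collections import defaultdict, deque


def affected_outputs(depends_on, failed):
    """depends_on maps each output to its inputs (feeds or other outputs).
    Return every output that directly or transitively uses a failed input."""
    consumers = defaultdict(list)  # invert the graph: input -> outputs using it
    for output, inputs in depends_on.items():
        for inp in inputs:
            consumers[inp].append(output)
    affected, queue = set(), deque(failed)
    while queue:  # breadth-first walk downstream from the failed inputs
        node = queue.popleft()
        for out in consumers[node]:
            if out not in affected:
                affected.add(out)
                queue.append(out)
    return affected


# Usage: a curve built from three fixings, with two reports built on the curve.
depends_on = {
    "FWD_CURVE": ["FIXING_A", "FIXING_B", "FIXING_C"],
    "VAR_REPORT": ["FWD_CURVE"],
    "PNL_REPORT": ["FWD_CURVE", "FX_RATE"],
}
print(sorted(affected_outputs(depends_on, ["FIXING_B"])))  # ['FWD_CURVE', 'PNL_REPORT', 'VAR_REPORT']
```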

Organisations that start with ten feeds and grow to thirty often find that what was once a manageable side activity has become a significant operational dependency - without the infrastructure, tooling, or headcount to support it properly.

The true cost is often only realised when something goes wrong, and it can far exceed the perceived savings of an in-house build.

The Alternative

Outsourcing to a Specialist

Companies like OpenDataDSL exist because this operational burden is substantial enough that it makes more sense, for most organisations, to hand it to a specialist.

A managed market data service provides:

- Operational monitoring across all feeds, every day, with defined alerting and escalation

- Validation against expected publication specifications, not just successful ingestion

- Format resilience built from experience across dozens of providers and years of format changes

- Revision management applied systematically and recorded for audit

- Vendor relationships owned and managed by people who do this every day

- Audit-ready data lineage from source to storage

The question is not whether in-house market data management is possible. It is whether it is the best use of your team's time and your organisation's capital - and whether the risk of getting it wrong is one you want to own.

For most organisations, the honest answer is no.

Interested in discussing what a migration from your current platform could look like? Get in touch with the OpenDataDSL team - we're happy to start with a conversation, not a pitch.

OpenDataDSL is a modern, cloud-native data management platform built for energy and commodities markets. From market data ingestion to ETRM integration, OpenDataDSL gives trading organisations the control, visibility, and flexibility they need to compete.
